Fraud doesn’t simply grow; it expands and becomes richer. New tactics emerge while the foundations are updated and remain strong.

At Resistant AI, this has been our focus since 2019. We work to understand how fraud actually works. Instead of one-off solutions, we study evolution, always preparing for the next attack.

With our Global Document Fraud Report 2026 coming out last week, we thought this would be a great opportunity to look back at the evolution of fraud over the last few years.

We care about stopping crime, but we care just as much about understanding it: how criminals adapt, where trust breaks down, and why each new layer of innovation creates new opportunities for abuse.

Fraud’s upward trajectory

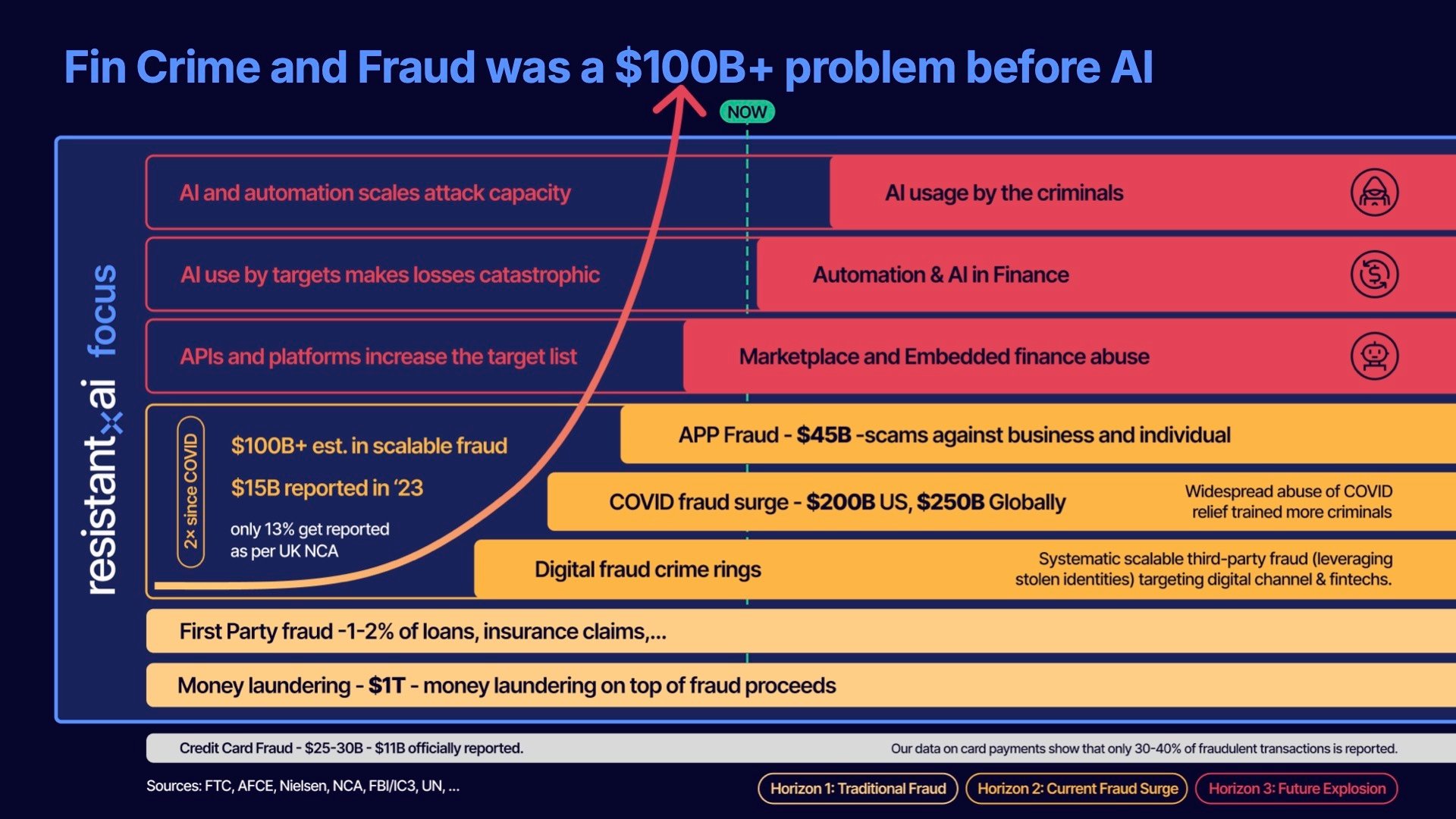

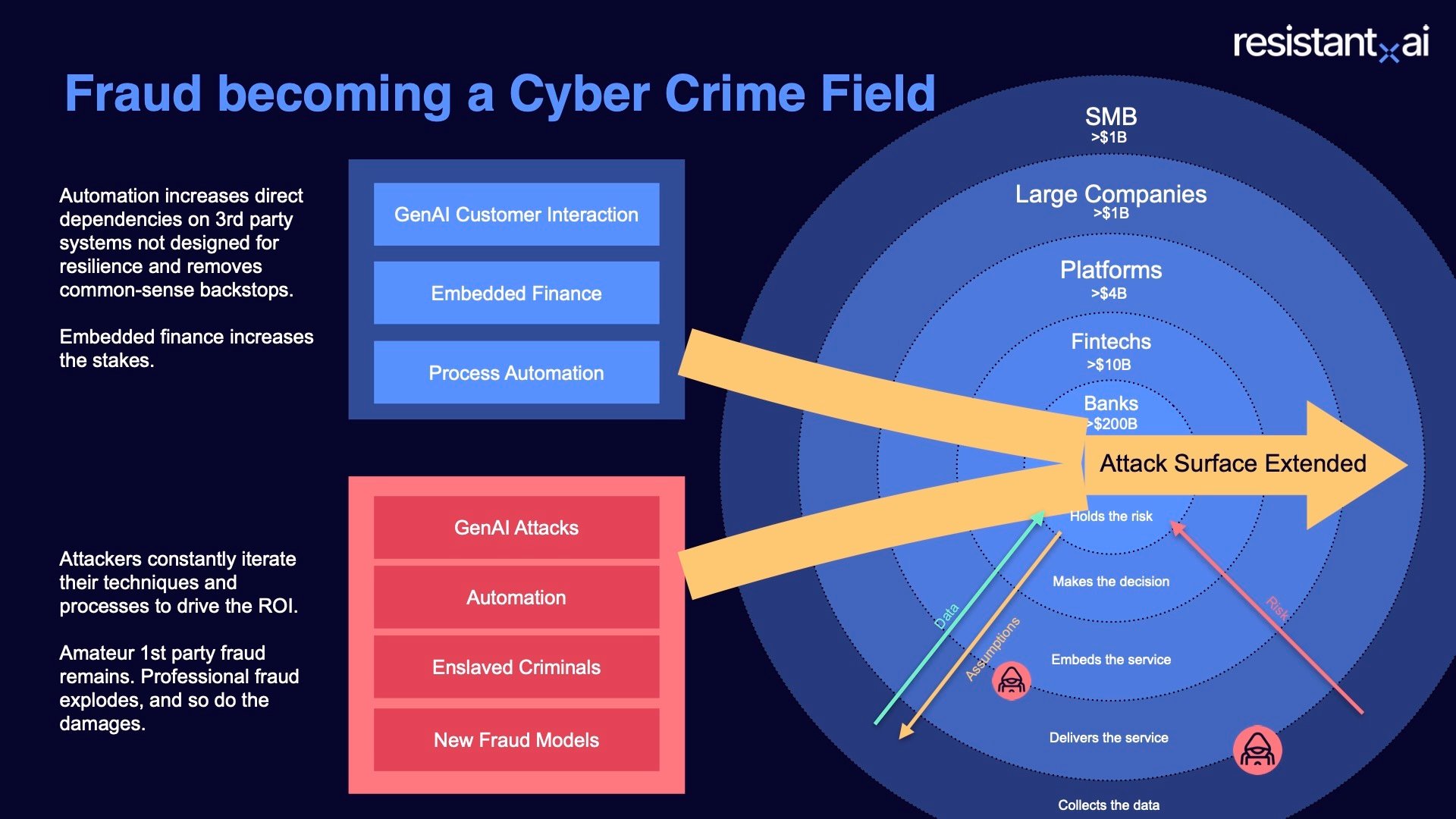

The infographic above tells the story of fraud’s upward trajectory through time: as a new tier emerges, the one beneath only becomes more complex and dangerous.

The key takeaway is clear:

The existence of fraud hasn’t changed, but the infrastructure that surrounds it has.

Fraud evolves alongside the systems it targets.

Foundations remain: money laundering networks, first-party fraud, credit card abuse. But markets digitize, platforms open APIs, onboarding becomes automated, all necessary developments from a competitive perspective, but also clear expansions of the attack surface.

New tactics and vulnerabilities start to spring up: digital crime rings, pandemic-era exploitation, large-scale authorized push payment scams, online document fraud templates, and countless other threats.

Now, AI only accelerates everything, increasing speed, scale, and quality, iteration after iteration, at a pace even legacy institutions struggle to keep up with.

Fraud is not static. But it also doesn’t happen in silos or in a vacuum.

Each layer begins as a symptom of its time and is then retooled for the next. We see recurring themes throughout the layers (underreporting of actual losses, people taking advantage of weaknesses and opportunities, etc.).

But what happened on each individual layer? How did they change over time? And how can they inform us on building a more secure future?

What follows is an exploration of these topics. We’ll look at enduring foundations, how new opportunities expanded the surface for abuse, and the ways criminals have industrialized their methods.

Read on to learn more.

Money laundering and first-party fraud: The baseline that never went away

As we move through this piece, you’ll notice an underlying pre- and post-COVID narrative. One of the primary catalysts for this shift is how many financial services moved online as a result of the pandemic.

Sure, digitization was already happening long before COVID-19 made it a necessity. But the world wasn’t yet the always-online economy we know today.

Many people hadn’t placed their first Amazon order, groceries were still bought mostly in stores, and opening a bank account usually meant showing up at a branch. Criminals were still using hand-forged documents, impersonating people physically, running boiler-room call centers, and skimming cards at ATMs and point-of-sale terminals.

Still, even against that backdrop, financial crime was already immense (a problem worth more than a hundred billion dollars long before the first lockdowns in winter 2020).

Before we discuss how much changed after the pandemic, we need to understand this fraud baseline in the pre-COVID world.

Outside the ever-present threat of credit card fraud, two forces dominated the landscape: money laundering and first-party fraud. Let’s look at how they form that foundation and how they’ve evolved over the last few years.

Money laundering expanding

Money laundering has always been the foundation of financial crime. It’s not new, and it’s certainly not shrinking. Estimates put the scale at 2-5% of global GDP, between EUR 715 billion and EUR 1.87 trillion. That figure continues to grow as crime expands, but also as the definition of laundering has broadened.

Originally, it was tied to the war on drugs and the war on terror. The mechanics were consistent, following three stages:

- Placement. Getting illicit money into the financial system.

- Layering. Moving it through transactions to hide its origin.

- Integration. Returning it as seemingly legitimate funds.

In practice, that typically meant funneling illicit cash into a business, disguising its source through transfers, shell accounts, or fraudulent transactions, and reintroducing it into the economy as if it were legitimate.

Old-school “car wash” operations still exist, trying to achieve placement and layering at the same time. But they are clumsy and hard to hide at scale (you’d have to wash every car in the tristate area to launder modern criminal proceeds).

More discreet examples endure too: in Montreal, rows of bars stay empty night after night, but the lights stay on because they function as fronts for groups like the Hells Angels. In parts of the UK, barber shops and small cafes stay open despite sparse foot traffic and few, if any, customers.

Today, the definition of money laundering covers a wider range of activity:

[Image: money laundering (expanded definition)]

- Sanctions evasion. The deliberate concealment of parties, ownership, origin, or destination of funds to bypass economic or trade restrictions imposed by governments or international bodies.

- Online scams. Fraud schemes executed through digital channels (including business email compromise (BEC), phishing, impersonation, investment scams, and romance scams) that generate proceeds requiring laundering through financial and payment systems.

- Ransomware / digital extortion. The encryption or theft of data to demand payment (often via virtual assets), with proceeds subsequently layered and integrated into the financial system.

- Digital money trails. The increasing integration of digital banking, fintech platforms, underground banking networks, and crypto rails to move and obscure illicit online proceeds.

- Scam proceeds (APP & impersonation fraud). Funds obtained through authorized push payment fraud and social engineering schemes, where victims willingly transfer money that must then be rapidly dispersed and laundered.

Beyond the definition, what’s changed most is velocity. Digital transactions and instant payments have made laundering instantaneous. Take APP fraud, for example: instead of that Nigerian prince waiting three days for a transaction to clear, he can begin reclaiming his kingdom in just a few minutes.

And as soon as funds are stolen, the account they land in becomes a mule account, beginning the layering process immediately. The crime and the laundering blur into one:

Money moves and is already bouncing through mule networks before anyone notices. By the time a victim reports it, it is often gone for good. Criminals aren’t just hiding illicit funds anymore; they’re stealing and hiding money at the same time.

Recruitment has also evolved: criminals flaunt stacks of cash on TikTok, pitching deals such as “sell us your phone and bank account, then report them stolen after a week.”

In exchange: a payout up front and plausible deniability after.

These schemes show how laundering has become democratized. It’s not just the domain of drug cartels or terrorist networks, but also of everyday people, coerced or incentivized.

In some extreme cases, entire underground financial ecosystems emerge. Triangular laundering schemes link Chinese brokers, Latin American cartels, and U.S. drug proceeds, using networks of money mules that include regular U.S. or Chinese passport holders.

The brokers arrange peso payments to cartels in Mexico while selling the dollars to Chinese nationals seeking to move money out of China.

The evolution of money laundering compliance

On the institutional side, the compliance landscape hasn’t always evolved at the same pace. Historically, many banks optimized for regulatory adherence (to be compliant) rather than designing systems explicitly to disrupt criminal networks.

Money laundering doesn’t directly damage the balance sheet in the way credit fraud or operational failures might. The risk is primarily regulatory: fines, consent orders, deferred prosecution agreements.

As a result, AML programs must balance two competing demands: meeting supervisory expectations while also stopping increasingly sophisticated financial crime.

This isn’t a criticism of compliance professionals or financial institutions. Banks are responsible for preventing illicit finance, and the people running AML programs are often deeply committed to that mission. But the scale of the system is enormous, and financial crime is evolving rapidly.

Regulators cannot audit every transaction, and banks cannot manually investigate everything that looks suspicious. The challenge isn't effort, it's efficacy: identifying the genuinely dangerous signals within vast volumes of financial activity.

That is where better fraud detection technology (like ours at Resistant) becomes critical, allowing institutions to be both compliant and effective in a fraud and money-laundering landscape that is innovating faster every year.

Regulators look for evidence that companies are making genuine efforts, for example by adapting controls to the risks in each geography rather than copying a playbook across markets. If a bank expands into Mexico but doesn’t adjust its controls, regulators treat it as suspicious, regardless of how thorough those controls may be.

And now, with the rise of APP fraud and other peer-to-peer scams, the effort institutions put into preventing laundering has come under public scrutiny.

That’s the big shift:

The expectation is to demonstrate real vigilance. Laundering is no longer just about cartels, terrorists, or shadowy offshore networks.

It’s woven into the day-to-day transactions of every customer. Institutions need to show they’re aware of this and are actively fighting against it.

It affects everyday customers. The proceeds of scams (particularly APP fraud) begin with real people being deceived and losing their savings. Those funds still need to be moved, layered, and integrated (laundered) back into the financial system.

As a result, laundering isn't seen as an abstract compliance risk anymore, but as part of the same harm experienced by victims.

Mandatory reimbursement laws are one response, but many institutions have begun compensating victims even before being required to do so, driven by public outrage and reputational risk.

Pressure is mounting for them to confront money laundering directly: adapting controls, investing in detection, and treating financial crime as a core business risk, not a regulatory hurdle.

The first-party fraud problem

Alongside laundering, first-party fraud creates another financial crime baseline. This is when people lie under their own name for financial gain. For example:

- Inflating income on a mortgage application.

- Exaggerating damages for an insurance claim.

- Taking out a loan they never intend to repay.

It’s not usually organized crime, but it’s pervasive. One in 116 mortgage applications shows indications of fraud, and insurance claims show even higher rates (10-20% in some cases).

The motivations fall into two camps: desperation and moral hazard. Someone in financial distress might misrepresent their earnings to secure a mortgage or falsify an insurance claim to get a payout they think they “deserve.” Others view the system as a loophole to be gamed. This is the fraud triangle at work: opportunity, pressure, and rationalization combine to tip ordinary people into crime.

[Image: fraud triangle (RAI)]

Opportunity

Before, these deceptions were limited by friction. Forged documents required skill, access to equipment, or the nerve to face a banker in person. Today, new technology lowers the bar dramatically.

With nothing more than an application like Preview on Mac, anyone can edit a PDF, swap fonts, or adjust figures until they look credible. Gen AI can generate convincing fakes entirely from scratch (even more convincing when using a template) and write the automation code that drives hordes of fakes through onboarding systems.

No expert forger required. No 10 years of criminal expertise. The quality of these DIY manipulations is high enough to pass most initial checks, especially at scale.

Pressure

Couple that with external financial pressures from COVID, the following recession, and the opportunity provided by countless government programs (widely available and ripe for exploitation), and you have two of three ingredients for a fraud cocktail ready for brewing.

Rationalization

But what about the rationalization? A consumer who fabricates an insurance claim isn’t necessarily a hardened criminal; they’re likely rationalizing that they’ll “find a way to pay it back” later or that “everyone is doing it.” With the mass availability of fraud tactics today, that second argument only sounds more convincing.

First-party fraud, however, has a natural cap. It’s tied to an individual’s own name. There’s a ceiling on how far someone is willing to expose themselves. A person inflating income on a mortgage isn’t trying to disappear (they just want a better rate).

Key takeaway:

First-party fraud is fundamentally different from systemic organized fraud.

It’s not infinitely scalable per person, but it becomes damaging in aggregate because so many people are tempted to cross that line.

With the onset of COVID, that number only grew larger.

COVID-19: The great accelerator

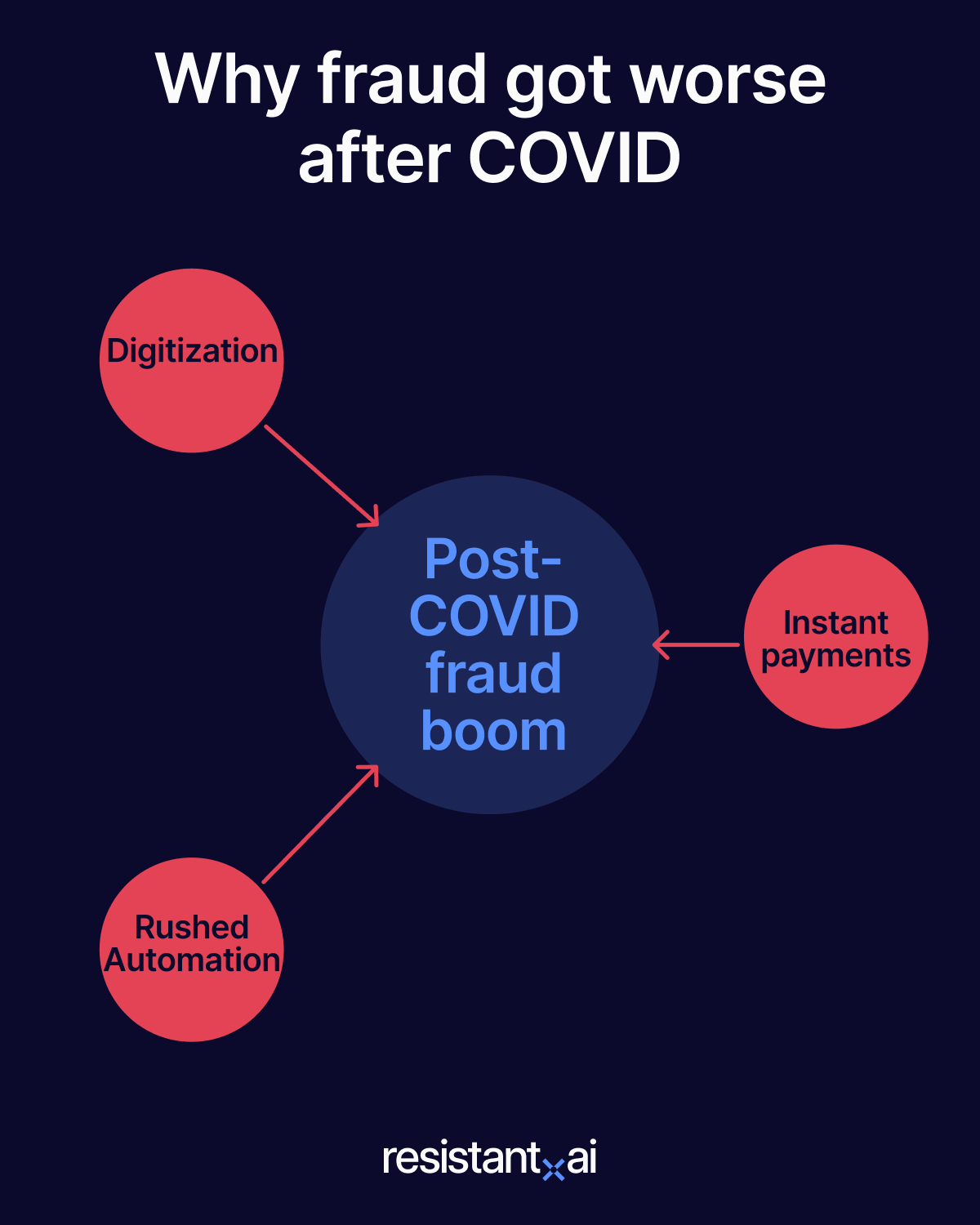

As we already mentioned (several times), experts can point to COVID-19 as the major catalyst for many shifts and evolutions in the financial crime space. However, the pandemic didn’t invent new types of fraud out of thin air. It rewired the environment in a way that multiplied every risk.

What had once been optional (digital shopping, remote onboarding, instant payments) suddenly became the default.

[Image carousel: groceries, banking, work and payroll, government, merchant & business, payment infrastructure, identity verification]

Banks and businesses were forced to digitize processes overnight, while governments rolled out relief programs at unprecedented speed. Those same changes exposed cracks that criminals (and ordinary people under financial pressure) were quick to exploit.

Out of that mix came a surge of fraud that was bigger, faster, and more widespread than anything that came before. What was the result? An estimated $200 billion lost in the United States alone. And that’s just for government subsidies and assistance programs.

COVID didn’t just make victims easier to reach. It gave fraudsters (and a new wave of opportunists) the perfect testbed to abuse hastily built systems, from subsidy portals to streamlined account opening. Let’s look at how and why this happened:

When “digital optional” became the operating system

The pandemic flipped a switch. What used to be a convenience (ordering online, remote onboarding) became the only way to keep moving. Legacy institutions once rolled their eyes at neobanks for their remote onboarding expertise. Suddenly, they found themselves shutting their doors to the public while still needing to approve loans and open accounts.

Every business that treated the web as an add-on or an afterthought suddenly had to make it a priority. That surge in digital volume forced automation into places that hadn’t been ready for it, and the scramble left seams: document pipelines built in a hurry, risk checks deferred, controls copied from one channel to another without enough adaptation.

The result wasn’t just more online activity; it was a wider surface with fresh angles to probe.

This shift also raised the baseline of what a fraud team had to process. PDFs and images arrived in bulk. Identity proofing moved from counters to cameras. Liveness checks and “selfie with ID” flows reduced some risk but introduced new blind spots, especially for organizations that had to stand them up overnight.

Speed rewired the risk model

On top of the haste needed to set up these systems, institutions faced a new challenge: the speed of operations.

Under the old clearing system, money moved in days. Fraud could be spotted mid-stream and stopped. Instant rails made that window disappear. The same scams that existed for decades suddenly operated at a new tempo: once a payment left an account, it was already bouncing through mules and often unrecoverable by the time anyone reported it.

Speed also changed the texture of familiar threats. A scam like a business email compromise no longer needed a sophisticated breach; a supplier address with one letter off (an “o” swapped for a “0”) and a convincing invoice could move funds before a bank or a buyer had time to sanity-check the destination.

[Image: phish email]

For a single attack this might not pose much of a threat, but add automated mailing lists and invoice generators into the mix and suddenly you’re dealing with millions of tiny mistakes to spot.
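One way to catch the one-character swap described above is to compare a sender’s domain against known supplier domains after folding common lookalike characters. The sketch below is illustrative only: the `CONFUSABLES` map, the `known_domains` list, and the function names are assumptions made for this example, and it covers digit-for-letter swaps rather than every kind of typo.

```python
# Hypothetical sketch: flag sender domains that imitate a known supplier domain.

# Assumed, deliberately tiny map of lookalike characters; real lists are far longer.
CONFUSABLES = str.maketrans({"0": "o", "1": "l", "3": "e", "5": "s"})


def normalize(domain: str) -> str:
    """Lowercase the domain and fold lookalike digits into letters."""
    return domain.lower().translate(CONFUSABLES)


def is_lookalike(sender_domain: str, known_domains: list[str]) -> bool:
    """Return True if the sender is not a known domain but normalizes to one."""
    known = {d.lower() for d in known_domains}
    sender = sender_domain.lower()
    if sender in known:
        return False  # exact match: a genuine supplier domain
    normalized_known = {normalize(d) for d in known}
    return normalize(sender) in normalized_known
```

Run over every inbound invoice, a check like this turns “millions of tiny mistakes” into a short list of senders worth a second look before funds move.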

This increase in speed can be attributed (at least partly) to instant payment/transfer technology, the immediate transfer of funds from one authorized account to another.

The technology that enables instant payments (real-time payment rails) was already live or being rolled out before COVID in many regions (e.g., Faster Payments in the UK has been live since 2008, SEPA Instant in Europe since 2017, and the U.S. launched both Zelle and RTP in 2017).

Despite their presence in many markets, pre-COVID usage was uneven and often treated as a convenience channel. When the pandemic hit, branches closed and face-to-face banking was off the table, so both businesses and consumers needed a way to move money without delay.

Governments were also racing to distribute relief funds quickly, and real-time rails offered the fastest path. What had been an optional convenience became an operational necessity almost overnight, creating a massive shift in how money flowed and making fraudulent transactions even harder to stop.

The label changes by market (APP scams in the UK and Europe; real-time payment, Venmo, or Chime fraud in the US) but the mechanics stay consistent: fraud that is simple to execute, easy to scale, and nearly impossible to unwind.

It doesn’t require technical skill or deep intrusion (just persuasion) and, once the money moves, it clears instantly, leaving no time for banks to manually intervene (but plenty of time if you have our solution).

Reporting stays thin, pressure mounts, and the absence of that two-to-three-day buffer makes everything harder to track. With the right mule network, a relatively simple crime becomes repeatable and much harder to defend.

That’s why, today, some teams have begun intentionally slowing certain transactions to create a catch window. But this is more of a bandage than a cure.
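The “catch window” idea can be sketched as a simple hold rule. Everything here is an assumption for illustration: the `Payment` fields, the thresholds, and the four-hour hold are hypothetical and would need tuning against real data.

```python
from dataclasses import dataclass
from datetime import timedelta


@dataclass
class Payment:
    amount: float
    payee_is_new: bool            # first payment to this beneficiary (assumed signal)
    payee_account_age_days: int   # age of the receiving account, if known


def hold_duration(p: Payment,
                  amount_threshold: float = 1_000.0,
                  young_account_days: int = 30) -> timedelta:
    """Return how long to delay a payment before releasing it.

    Hypothetical rule: only large payments to a new payee whose account
    was opened recently are held; everything else clears instantly.
    """
    risky = (
        p.payee_is_new
        and p.amount >= amount_threshold
        and p.payee_account_age_days < young_account_days
    )
    return timedelta(hours=4) if risky else timedelta(0)
```

Holding only the narrow slice of payments that match all three conditions keeps the instant experience for most customers, which is also why a rule like this is a bandage: anything outside the rule still clears immediately.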

Automation under pressure

So, transactions were moving faster, everyone needed to automate, and that scramble to automate during lockdown also created predictable gaps.

- Legacy institutions. Needed to catch up to neobanks: their processes were more thorough on paper, collecting all documents upfront before onboarding (ID, proof of address, bank statements, etc), but they weren’t designed for digital-by-default onboarding at scale.

- Neobanks. Tried to be more efficient with their checks, escalating only when withdrawals or larger transactions appeared. This helped preserve frictionless onboarding, but could expose them to coordinated abuse: from repeated low-value fraud to organized account farming and mule networks operating at scale.

Neither approach is inherently wrong, but both created seams that criminals could exploit.

To cope with the flood of digital applications, banks and fintechs turned to new tools. Human reviewers could no longer accommodate the massive workloads. Intelligent document processors (IDPs) were rolled out to handle the sheer volume of files, while liveness checks and even “hostage selfies” (holding your ID next to your face) became standard.

These automations made onboarding faster and more scalable.

Fraudsters adapted just as quickly. Early attacks were crude: swapping faces on ID images, pasting data into templates. Many humans could still spot these tells, but humans weren’t reviewing every case anymore.

When ill-designed systems offered detailed rejection reasons (why a document failed, what to fix), criminals iterated, refining their submissions until they met the requirements. Some even learned to use manual escalation intentionally, triggering a human reviewer they could attempt to social-engineer.

For example:

A fraudster targets the account-recovery process of a marketplace. Automated systems may block unfamiliar devices or locations, making direct login difficult.

Instead, the attacker moves through the recovery process and selects options like “I no longer have access to this phone,” which escalate the case to a human support queue.

From there, armed with publicly available business details and a rehearsed urgency narrative, they attempt to persuade a support agent to override safeguards.
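One mitigation for the rejection-reason feedback loop described earlier is to log the detailed failure internally while showing the applicant only a generic message. This is a minimal sketch under stated assumptions: the check names and the `review_document` function are hypothetical, not a description of any specific product.

```python
import logging

logger = logging.getLogger("onboarding")

GENERIC_MESSAGE = "We could not verify this document. Please contact support."


def review_document(doc_id: str, failed_checks: list[str]) -> dict:
    """Return the applicant-facing response for a document review.

    `failed_checks` holds internal check names (hypothetical examples:
    "font_mismatch", "metadata_edited"). They are logged for analysts
    but never echoed back to the submitter.
    """
    if not failed_checks:
        return {"doc_id": doc_id, "accepted": True, "message": "Document accepted."}
    logger.info("doc %s rejected: %s", doc_id, ", ".join(failed_checks))
    return {"doc_id": doc_id, "accepted": False, "message": GENERIC_MESSAGE}
```

The trade-off is real: genuine applicants get less guidance on what to fix, so a design like this usually needs a support path that verifies the person before sharing specifics.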

The larger issue wasn’t just how fast these systems were built, but how much confidence was placed in their technology. For example, liveness checks seemed like a silver bullet, until deepfakes made them obsolete. Every new safeguard became a challenge for criminals to overcome, and every iteration got faster.

The pattern that emerges from all of this isn’t just “more fraud.” It’s a new geometry. Digitization expanded targets, instant payment rails removed time, and rushed automation created seams.

Once those three conditions existed together, industrialized abuse was inevitable, and it didn’t take long for organized groups to treat these flows like a system to be tested, mapped, and scaled.

Subsidies and BNPL: Examples of exploitation

A fast-moving, hastily automated, and exclusively digital world created a hotbed of fraud activity.

But what systems got exploited first?

Government subsidies and buy now, pay later companies were some of the first to shoulder the burden. They were supposed to stabilize households and provide relief. They also trained more criminals and created an appetite that didn’t go away.

Subsidies

The theft of pandemic subsidies ran to vast numbers in both the US and globally — enough to sit alongside money laundering as one of the only categories that can claim that kind of magnitude. People fabricated businesses, stacked paperwork, and learned how to make digital systems say “yes.”

BNPL

Buy now, pay later (BNPL) rode the same wave. Instead of requiring the same credit history and application rigor as traditional loans, BNPL services offered small, short-term financing almost instantly. You could split a $200 purchase into four payments without a credit check, which opened the door to people who might otherwise be locked out of traditional credit systems.

COVID made people’s pay stubs lighter, and BNPL offered readily available relief for many families. However, that same easy access made these services attractive targets for fraudsters. The playbook was simple: spin up identities, build “credit” with small purchases, push for a larger ticket, disappear.

Fraud detection

The difference between manageable loss and ballooning liability came down to detecting these fraud patterns on the first or second transaction, not the fifth. It’s one problem when a criminal realizes a crime is possible; it’s much worse when they realize it’s repeatable.
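The “small purchases, then a larger ticket” playbook can be illustrated with a very simple rule that compares a new order with the account’s typical purchase. This is a hedged sketch, not a production detector: the function name, the 5x multiple, and the two-order minimum are assumptions chosen for the example.

```python
from statistics import median


def is_ticket_jump(history: list[float], new_amount: float,
                   multiple: float = 5.0, min_history: int = 2) -> bool:
    """Flag an order far larger than the account's typical purchase.

    Hypothetical rule: with at least `min_history` prior orders, flag the
    new order if it is at least `multiple` times the median prior amount.
    """
    if len(history) < min_history:
        return False  # not enough history to judge a jump
    return new_amount >= multiple * median(history)
```

A rule like this only fires at the “larger ticket” step. Catching the pattern on the first or second transaction would need signals available at signup, such as attributes shared across newly created accounts.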

Exploiting services like this may seem like a first-party fraud problem. However, COVID also changed the way criminals operate, giving rise to organized rings that both enact fraud at scale and better enable individuals with advanced tactics and resources.

What started as individuals exploiting COVID-era gaps quickly industrialized. Fraud rings built the infrastructure to scale those same tricks into repeatable processes.

From lone actors to professional digital fraud rings

As we can see, COVID impacted financial crime and fraud by expanding opportunities for everybody. Some of the groups who took advantage of those opportunities were sophisticated criminal operations.

The “fraud office” image (rows of cubicles operating like a telemarketing floor in high-crime regions) didn’t vanish under lockdowns; if anything, its methods spread.

Investigations into scam hubs in India have described operations run like call centers: workers making back-to-back calls from scripts and juggling multiple victims at once. The industry is constantly recruiting, with high turnover as newcomers learn the playbook and sometimes spin up their own schemes.

These offices were already operating remotely across borders long before the pandemic. When lockdowns normalized remote work, many individuals simply followed the same model.

Still, it’s important to note that these operations didn’t replace individual fraud. There are just as many amateur or “everyday” fraudsters now as there ever were. Even more, perhaps. First-party fraud didn’t disappear. If anything, the opposite happened.

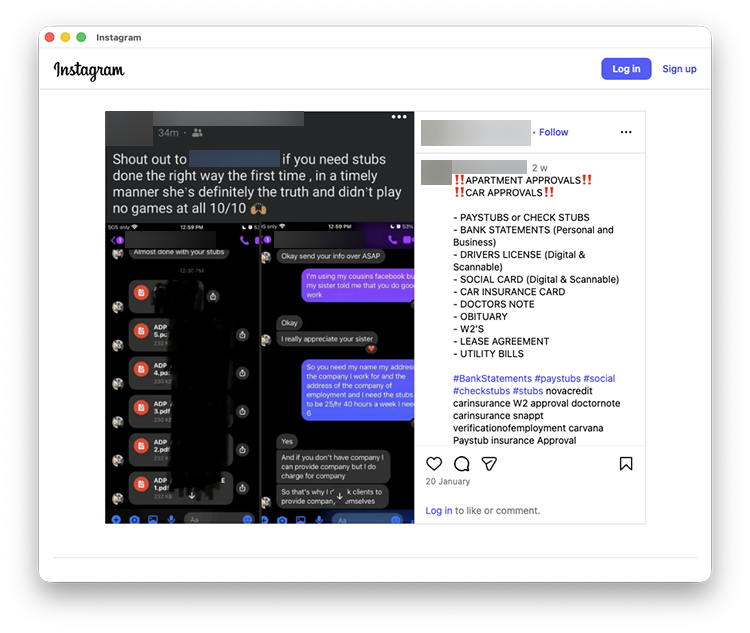

Organized crime groups have risen on top of that foundation, systematizing fraud and providing infrastructure (template farms, pre-authorized account selling, hackers-for-hire) that makes it easier for less sophisticated actors to get involved.

The ecosystem today is layered: individuals still committing opportunistic fraud, and professional networks turning that activity into a scalable, industrialized market.

Fraud scales into an ecosystem

Once upon a time, fraudsters had to figure things out alone (digging through underground forums or hacking guides) to piece together their attacks. Post-COVID digitization has changed that.

Social media, encrypted chat groups, and forums made it possible to share tools, outsource expertise, and coordinate in real time.

A simple “Chat, I need this code” message can link one fraudster to a specialist halfway around the world.

The result is an ecosystem that looks less like lone wolves and more like startups. Professional fraud rings recruit specialists, outsource tasks, and build technology stacks that rival legitimate businesses. Some operate as tight-knit groups, others share tools loosely, but all treat fraud as a repeatable process rather than a one-off gamble.

They operate in lightly regulated regions with minimal enforcement, sometimes as self-sufficient entities (ignored by law enforcement), other times as mechanisms of the government itself to bypass international law (e.g., more merchant onboarding in places like Indonesia and the Philippines to work around tariffs in the U.S.).

These rings are a global issue, not tied to one jurisdiction, but they thrive in these more lenient environments.

Once organized, their assaults can be devastating. A single fraudster with access to this industrial infrastructure (template farms for forged documents, stolen identity bundles that include full financial histories, sales of pre-authorized bank and payment accounts, and ready-made mule networks) is exponentially more dangerous than a hundred uncoordinated actors.

Fraud rings have also realized where the profit lies. It’s a lot simpler (and less risky) to sell an account (or the documents needed to open one) to someone else than to use it to commit fraud yourself.

This is what we mean when we say first-party fraud hasn’t disappeared. These professional groups give ordinary people new resources. Individuals who once had to carefully fake their way through applications can now access these tools with a simple Google search.

That makes everyday fraudsters more dangerous, because they don’t need extensive training or infrastructure. The ecosystem does that for them. In doing so, fraud rings have multiplied the scale and speed of attacks in ways that would have been unthinkable even a decade ago. Industrialization turned quantity into force.

Why scale makes the difference

One person with a hundred accounts can scale faster and hit harder than a hundred people with one account each. On the first-party level, you can only submit so many claims, forge so many documents, or open so many accounts before you’re eventually caught.

A fraud ring can generate fifteen (or more) fake identities, wire them together with scripts, and run them simultaneously with no single person to shoulder the risk or be held accountable. They can probe weaknesses in onboarding flows en masse and adjust until they find what works. Once an approach gets through, it’s templated, parameterized, and replayed.

Why scale matters:

Industrialized fraud is harder to detect, harder to contain, and vastly more damaging.

Instead of a single gap giving way to a singular attempt that’s spotted and fixed, scammers can drive a busload of fakes through that gap before an institution is any the wiser.

Patterns blur under volume, accountability diffuses across networks, and the speed of exploitation means that any human-based defenses are always playing catch-up. And this only accelerates as tools improve and infrastructure gets cheaper.

Once fraud behaves like an AI-enabled organization, it targets other organizations’ automation with the same logic: probe inputs, measure outputs, and scale whatever gets through. To keep up, organizations have to implement more advanced automation and technology. That’s where the “automation vs. automation” era begins.

Automation’s double-edged sword

At scale, automation is unavoidable. Institutions must process thousands of documents a day, far beyond what manual reviewers can handle. We already covered the fact that hasty automations can leave security gaps.

That’s why humans still have a role in most security workflows — two roles, in fact:

- Operational (in-the-loop) defense. Focusing not on repeatable patterns (which should be automated) but on novel or ambiguous cases where creativity and intuition are required.

- Strategic (on-the-loop) defense. Reviewing patterns across the organization (if four mule accounts appear in a week, what do they share? What did the automation miss?). These meta-level insights feed back into stronger controls.
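The on-the-loop question “what do they share?” can be sketched as a count of attribute values across the flagged accounts. The field names (`device_id`, `signup_ip`) and the sample records below are hypothetical, invented purely to illustrate the idea.

```python
from collections import Counter


def shared_attributes(accounts: list[dict], min_share: int = 2) -> dict:
    """Return attribute values that appear on at least `min_share` accounts.

    Each account is a dict of attribute name -> value (field names are
    hypothetical). The result maps (attribute, value) -> number of accounts.
    """
    counts = Counter(
        (key, value)
        for account in accounts
        for key, value in account.items()
        if key != "account_id"  # identifiers are unique by definition
    )
    return {pair: n for pair, n in counts.items() if n >= min_share}


# Made-up example: four mule accounts flagged in the same week.
mules = [
    {"account_id": "a1", "device_id": "dev-9", "signup_ip": "10.0.0.1"},
    {"account_id": "a2", "device_id": "dev-9", "signup_ip": "10.0.0.2"},
    {"account_id": "a3", "device_id": "dev-9", "signup_ip": "10.0.0.1"},
    {"account_id": "a4", "device_id": "dev-7", "signup_ip": "10.0.0.3"},
]
overlap = shared_attributes(mules)
```

In this made-up data, three accounts share a device and two share a signup IP, which is the kind of overlap an analyst could turn into a new automated control.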

The main problem isn’t always that automation is imperfect; it’s that it’s treated as if it is perfect. Customers expect it to work seamlessly; institutions expect it to handle volume without fail and deliver on expectations. That trust creates complacency.

Criminals understand this, and instead of trying to “hack” systems, they walk through the front door, manipulating processes to their advantage. And when automation fails, it fails at scale.

The transition into AI makes this worse. Where fraud rings once needed months to develop new tactics, AI tools compress innovation cycles to days. That pace of iteration means defenders are always reacting, rarely ahead of the danger.

AI supercharges fraud

Institutions automating their workflows created the surface; AI supercharged the attack. What began as opportunistic exploitation of digital workflows has become a cycle of rapid innovation where criminals use the same tools as defenders (but faster because of different risk profiles and loss models) in a constant game of cat and mouse. For example:

- Large language models. The same tools that every business is attempting to harness for their workflows can generate convincing documents, craft realistic synthetic identities, or drive hundreds of onboarding attempts at speed.

- Deepfake tools. Can now spoof liveness checks or produce higher-quality onboarding selfies.

Criminals can operate these tools from unreachable jurisdictions, pulling off attack after attack with little pushback. The impact is not simply more fraud, but faster fraud.

Every time a control is introduced, a new counter-strategy can be developed almost immediately. That’s why the “cat and mouse” dynamic has become more urgent: defenses and attacks iterate on the same timescale, and criminals no longer need months to catch up.

Again, overconfidence could be the underlying issue. There’s a striking double standard in how institutions approach AI. Product leaders often complain about even minimal false positive rates in fraud detection, calling them unacceptably high because they inconvenience real customers.

Fraudsters hold their own tools to no such standard. From their perspective, tolerance for failure makes perfect sense: they don’t care if their AI-driven process fails a third of the time, as long as enough attempts make it through.

Key takeaway:

Defenders must maintain extremely low false positives to preserve trust and customer experience, while attackers only need a tolerable level of success.

AI tips the balance because it amplifies what criminals are already comfortable with: iteration, diversity, and brute scale.
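The asymmetry can be put in rough numbers. The sketch below is illustrative only: the attempt count and per-attempt success rate are invented, and it assumes each attempt succeeds or fails independently, which real attacks may not.

```python
def expected_successes(attempts, success_rate):
    """Average number of attempts that get through."""
    return attempts * success_rate


def prob_at_least_one(attempts, success_rate):
    """Chance that at least one attempt gets through, assuming independent attempts."""
    return 1 - (1 - success_rate) ** attempts


# Hypothetical attacker: 100 automated onboarding attempts, each with only a 5% chance.
attempts = 100
success_rate = 0.05

avg_through = expected_successes(attempts, success_rate)
any_through = prob_at_least_one(attempts, success_rate)

print(f"Expected successful attempts: {avg_through:.1f}")
print(f"Probability at least one succeeds: {any_through:.3f}")
```

Even with a process that fails 95% of the time in this toy setup, the attacker is all but certain to land at least one account. A defender held to near-zero false positives has no comparable room for error.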

Robotic money mules: Synthetic ghosts

A great case study for these fraud innovations is the robotic money mule. Criminals once had to recruit people to commit crimes: risking exposure, relying on someone else’s cooperation, and leaving a loose end. Now, they can spin up fleets of AI-driven accounts.

Robotic money mule:

An automated or semi-automated account or digital entity used to receive, transfer, or withdraw illicit funds on behalf of a criminal network, performing the function traditionally carried out by human money mules.

Instead of one lone account, you now have diverse (and fully automated) account networks controlled by lines of code. These “ghost mules” don’t exist, don’t get arrested, and can be multiplied endlessly. If one is caught, there’s no loss; another can be generated (or bought from a pre-verified account seller) in minutes.

A scam that once required persuasion and social engineering can now rely on pre-scripted identities that receive, move, and obfuscate stolen funds automatically. With AI, robotic agents fill gaps faster and at greater scale.

And the gaps aren’t always in your onboarding.

Marketplaces and embedded finance have created an interconnected system with plenty of “side doors” where security might not be as advanced…

Marketplaces, embedded finance, and fragmented responsibility

The rise of online marketplaces and embedded finance has stretched the fraud surface further than ever before. These models thrive on convenience: reducing friction, expanding liquidity, and connecting more people to more services.

However, by spreading risk across multiple parties, they also make it easier to find a gap in security and harder to know who’s really responsible when things go wrong.

More players, more surface

A single transaction on a marketplace can touch half a dozen entities: the seller, the platform, the fintech powering payments, the bank providing rails, and any financing partner offering credit.

Each one has a piece of the risk, but no one has the full picture. A bank may be secure, but the marketplaces it works with might not have the same defenses, because their business models differ. A marketplace is built around faster onboarding, fewer checks, and seamless payments, and those same qualities are exactly what criminals look for.

And once money moves through that channel, it enters the formal system. This creates a kind of side door into banking, where criminals can pass funds through weaker links in the chain.

Historically, marketplace activity was often treated as a relatively low-risk signal: legitimate platforms processing real transactions between buyers and sellers. But that trust has been increasingly exploited.

Fraudsters now abuse marketplaces directly—through fake listings, account takeovers, or seller fraud—and indirectly as laundering rails, cycling illicit funds through seemingly legitimate transactions. In both cases, the marketplace adjacency that once signaled trust has become another point of entry into the financial system.

When fraud happens this way, which party is expected to spot it? The bank, with its license and regulators? The marketplace, with its seller relationships? Or the fintech, which processed the payment?

In most cases, no single entity has both the relationship with the customer and the visibility into the full transaction. That leaves attackers free to exploit the seams between them, and leaves defenders with little insight into how to prevent the next attack.

Examples are everywhere. A platform like eBay or Carvana connects individuals and businesses directly, often adding credit or financing options to help transactions move faster.

That makes them de facto financial intermediaries, even if their core business isn’t banking. Your bank enforces strict compliance checks, but smaller shops operating on these platforms can become laundering vehicles:

It’s much harder to move illicit funds directly through Chase than through a mountain bike shop in Idaho that exists only as an entry in a seller registry.

This is where KYB (know your business) becomes crucial. Fraudsters don’t even need to take over legitimate companies anymore; they can create shell merchants to act as pass-throughs.

In the embedded finance ecosystem, those shells can interact with banks and payment rails without ever being directly scrutinized.

Building a defense that evolves as fast as fraud

The evolution of fraud is clear: what began with the well-known foundations of money laundering and first-party fraud has scaled into industrialized criminal rings, automated assaults, and AI-powered tactics. Each wave has made fraud faster, more accessible, and harder to stop.

That’s why the old approach (checking compliance boxes or relying on point-and-shoot solutions) simply isn’t enough. Criminals are adapting at startup speed. Defenses need to evolve just as quickly.

Conclusion

At Resistant AI, we call this Defense in Depth: a layered strategy that combines document fraud detection with transaction monitoring and behavioral analysis. Instead of protecting one door, we build a system of overlapping barriers that makes it exponentially harder for criminals to slip through unnoticed.
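As a back-of-the-envelope illustration of why overlapping layers compound, consider the sketch below. The layer names mirror the ones above, but the per-layer miss rates are invented, and the calculation assumes the layers fail independently. Real layers are usually correlated, so the true combined miss rate would be higher than this idealized figure.

```python
from math import prod


def combined_miss_rate(layer_miss_rates):
    """Chance a fraudulent case slips past every layer, assuming independent layers."""
    return prod(layer_miss_rates)


# Hypothetical miss rates: the share of fraud each layer would miss on its own.
layers = {
    "document_fraud_detection": 0.10,
    "transaction_monitoring": 0.20,
    "behavioral_analysis": 0.30,
}

overall_miss = combined_miss_rate(layers.values())
best_single_layer = min(layers.values())

print(f"Best single layer misses {best_single_layer:.1%} of fraud")
print(f"All three layers together miss {overall_miss:.1%} (idealized)")
```

Under these assumptions, the combined miss rate is the product of the individual rates, so each added layer shrinks it multiplicatively instead of by a fixed amount.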

If you want to see how a layered defense can strengthen your organization against the next generation of fraud, scroll down and book a demo.

Frequently asked questions (FAQ)

Want to dig deeper into the evolution of fraud? Here are some of the most frequently asked questions on the topic from around the web.

How has document fraud evolved in the digital era?

Document fraud has shifted from manual forgery to scalable digital manipulation. Criminals use editing software, synthetic identity data, and AI tools to fabricate pay stubs, bank statements, invoices, and certificates of incorporation that pass superficial checks.

As onboarding and underwriting moved online, document verification became a primary attack surface.

Resistant Documents can perform forensic analysis of document structure, metadata, and internal consistency to spot signs of fraud the human eye can’t see.

Why are real-time payments increasing fraud risk?

Instant and real-time payment rails reduce friction for legitimate users, but they also reduce the time available to detect fraud. In schemes like authorized push payment (APP) fraud, victims willingly transfer funds, and once the transaction settles, recovery becomes difficult. The speed of modern payment infrastructure compresses investigation windows and enables rapid layering of illicit proceeds.

Resistant Transactions can detect suspicious payment flows before funds disappear.

Does AI create new types of fraud, or just scale existing ones?

AI rarely invents entirely new categories of fraud. Instead, it removes friction from existing tactics. Large language models can generate convincing phishing emails at scale. Image tools can produce realistic synthetic documents. Voice cloning enables impersonation scams.

The core fraud typologies remain the same (identity deception, social engineering, document manipulation) but AI dramatically increases their speed, quality, and volume.

Why did fraud surge during and after COVID-19?

The pandemic accelerated digital adoption across banking, government services, commerce, and onboarding. Processes that once involved physical presence (account opening, subsidy applications, merchant onboarding) moved online almost overnight.

Criminals didn’t invent new crimes; they exploited newly digitized systems operating at unprecedented scale and speed. Pandemic-era relief programs exposed structural weaknesses that continue to affect fraud patterns today.

What is the difference between fraud, money laundering, and financial crime?

Fraud is the act of deceiving someone to obtain money, assets, or data. It generates illicit proceeds.

Money laundering is the process of moving and disguising those illicit proceeds to make them appear legitimate.

Financial crime is the broader category that includes both fraud and money laundering, along with related offenses such as bribery, corruption, sanctions evasion, and terrorist financing.

Why do fraud systems struggle with false positives?

Fraud detection systems operate under a constant trade-off: reducing false negatives (missed fraud) without increasing false positives (legitimate customer friction). Even a one percent false positive rate can impact thousands of real users at scale. Institutions must balance precision, speed, and customer experience when considering solutions.
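A quick worked example shows how a small false positive rate adds up. All figures here (daily volume, fraud rate, detection rate) are assumptions chosen for illustration, not measurements from any real system.

```python
def alert_breakdown(daily_volume, fraud_rate, false_positive_rate, detection_rate):
    """Split one day's alerts into false alerts and true alerts, plus alert precision."""
    legit = daily_volume * (1 - fraud_rate)
    fraud = daily_volume * fraud_rate
    false_alerts = legit * false_positive_rate  # legitimate users wrongly flagged
    true_alerts = fraud * detection_rate        # fraud correctly flagged
    precision = true_alerts / (true_alerts + false_alerts)
    return false_alerts, true_alerts, precision


# Hypothetical institution: 100,000 applications a day, 0.5% of them fraudulent,
# a 1% false positive rate, and 90% of fraud detected.
false_alerts, true_alerts, precision = alert_breakdown(
    daily_volume=100_000,
    fraud_rate=0.005,
    false_positive_rate=0.01,
    detection_rate=0.90,
)

print(f"Legitimate users flagged per day: {false_alerts:.0f}")
print(f"Fraud cases flagged per day: {true_alerts:.0f}")
print(f"Share of alerts that are real fraud: {precision:.1%}")
```

In this scenario, a one percent false positive rate flags roughly a thousand legitimate users every day, and because fraud is rare, most alerts point at real customers. That is why seemingly small rates still draw complaints.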
